Jan 27, 2024

End-to-end network throughput – Addressing Disconnected Scenarios with AWS Snow Family

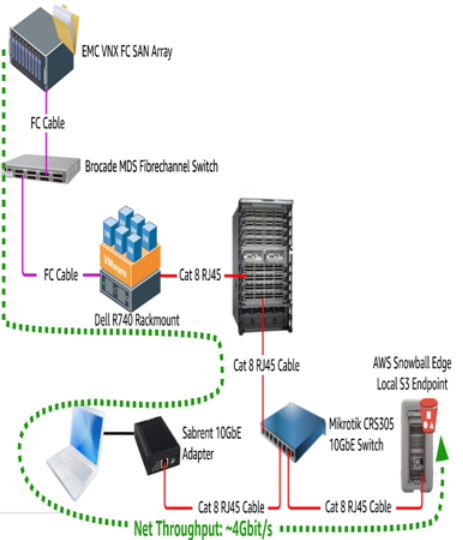

Of course, before starting any migration, even to a local device, one must evaluate every physical network link involved, end to end. Connecting the AWS Snowball device to a 40 GbE switchport via a Quad Small Form-factor Pluggable (QSFP) transceiver won't do much good if an upstream network link operates at only 1 Gbit/s:

Figure 4.3 – A full end-to-end throughput path

Additionally, there can be choke points on backend Storage Area Network (SAN) fabrics, disk arrays, Network-Attached Storage (NAS) devices, or virtualization software somewhere in the middle. In Figure 4.3, for example, the data being copied ultimately resides inside Virtual Machine Disk (VMDK) files on an aging SAN array attached via Fibre Channel (FC) to a server running VMware ESXi.

From the laptop's perspective, the data is being copied over Common Internet File System (CIFS) from one of the VMware VMs, but in reality there is a virtualization layer, and yet another layer of networking, behind that. If, for whatever reason, that SAN array's controller or disk group could push only 4 Gbit/s to the VMware host, the entire transfer would be capped at 4 Gbit/s, regardless of the fact that every component of the "normal" network supports 10 Gbit/s.
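The rule at work here is that end-to-end throughput is bounded by the slowest hop in the chain, whether that hop is a network link or a storage layer. The Python sketch below models a path loosely based on Figure 4.3 and reports the bottleneck. The 4 Gbit/s SAN figure, the 10 Gbit/s network, and the 40 GbE Snowball port come from the text above; the hop names and the class and function names (`Hop`, `bottleneck`) are hypothetical and for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hop:
    """One link or layer in the data path, with its usable throughput in Gbit/s."""
    name: str
    gbit_per_s: float


def bottleneck(path: list[Hop]) -> Hop:
    """Return the hop that caps end-to-end throughput (the slowest one)."""
    if not path:
        raise ValueError("path must contain at least one hop")
    return min(path, key=lambda hop: hop.gbit_per_s)


# Hypothetical path loosely modeled on Figure 4.3; hop names are illustrative.
path = [
    Hop("SAN array -> ESXi host (FC)", 4.0),
    Hop("ESXi host uplink", 10.0),
    Hop("Core switch", 10.0),
    Hop("Laptop NIC", 10.0),
    Hop("Snowball QSFP port", 40.0),
]

if __name__ == "__main__":
    slowest = bottleneck(path)
    print(f"End-to-end ceiling: {slowest.gbit_per_s} Gbit/s ({slowest.name})")
```

Upgrading any hop other than the reported one leaves the ceiling unchanged, which is why the whole path, including the storage behind the VMs, has to be assessed before buying faster switch ports.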

Data loader workstation resources

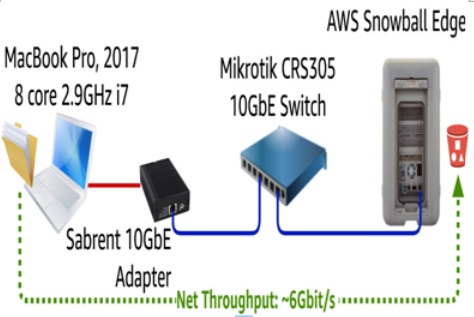

When transferring data to an AWS Snowball Edge device, note that the achievable throughput depends heavily on the CPU resources available on the machine performing the transfer.

Figure 4.4 – AWS Snowball Edge device loading from a laptop

In Figure 4.4, we can see that a reasonably powerful laptop with 8 CPU cores transfers around 6 Gbit/s, even though effectively 10 Gbit/s is available end to end on the network. With a more powerful machine, particularly one with more CPU cores, we would expect net throughput to rise.
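The gap between 6 Gbit/s and 10 Gbit/s looks modest, but it compounds over a large copy. The following Python sketch converts a sustained rate into wall-clock hours. The 50 TB dataset size is a hypothetical figure, `transfer_hours` is an illustrative helper name, and the calculation assumes a perfectly steady rate, ignoring per-file overhead and protocol effects.

```python
def transfer_hours(terabytes: float, gbit_per_s: float) -> float:
    """Hours to move `terabytes` (decimal TB) at a sustained `gbit_per_s`."""
    if gbit_per_s <= 0:
        raise ValueError("rate must be positive")
    bits = terabytes * 1e12 * 8          # decimal TB -> bits
    seconds = bits / (gbit_per_s * 1e9)  # Gbit/s -> bit/s
    return seconds / 3600


if __name__ == "__main__":
    dataset_tb = 50  # hypothetical dataset size
    for rate in (6, 10):  # CPU-limited laptop vs. full network rate
        print(f"{dataset_tb} TB at {rate} Gbit/s: {transfer_hours(dataset_tb, rate):.1f} h")
```

For 50 TB this works out to roughly 18.5 hours at 6 Gbit/s versus about 11.1 hours at 10 Gbit/s, so a data loader with more CPU headroom can save most of a working day on a single device load.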
