Aug 19, 2024

DNIs – Addressing Disconnected Scenarios with AWS Snow Family

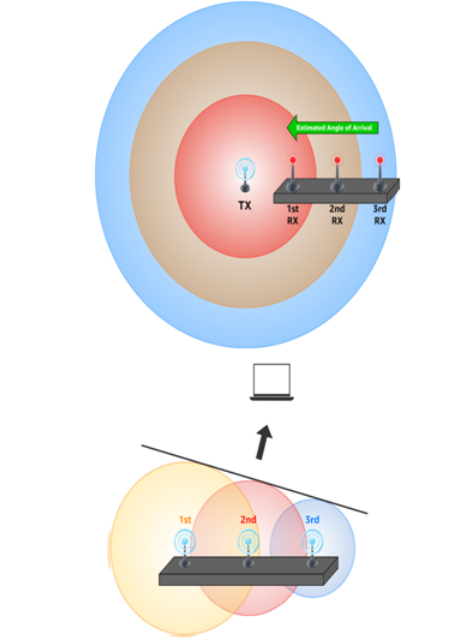

Direct network interfaces (DNIs) were introduced to AWS Snow Family devices to support advanced network use cases. DNIs provide layer 2 network access without any translation or filtering, enabling features such as multicast streams, transitive routing, and load balancing. This direct access enhances network performance and allows for customized network configurations.

DNIs support VLAN tags, enabling network segmentation and isolation within the Snow Family device. Additionally, the MAC address can be customized for each DNI, providing further flexibility in network configuration:

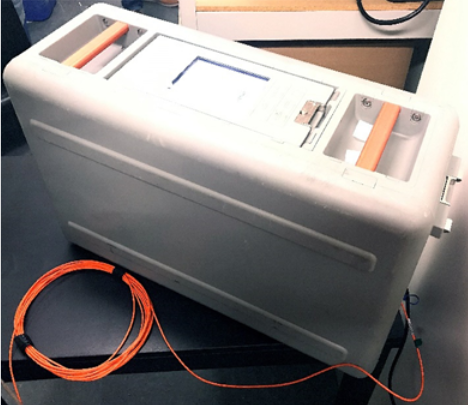

Figure 4.18 – AWS Snowball Edge device with one DNI

DNIs and security groups

It’s important to note that traffic on DNIs is not protected by security groups, so additional security measures need to be implemented at the application or network level.

Snowball Edge devices support DNIs on all types of physical Ethernet ports, with each port capable of accommodating up to seven DNIs. For example, RJ45 port #1 can have seven DNIs, with four DNIs mapped to one EC2 instance and three DNIs mapped to another instance. RJ45 port #2 could simultaneously accommodate an additional seven DNIs for other EC2 instances.
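The per-port limit described above can be sketched as a small bookkeeping model. This is only an illustration of the arithmetic, not an AWS API: the class name `PortDniAllocator`, the port labels, and the instance IDs are all hypothetical, and the only fact taken from the text is the limit of seven DNIs per physical port.

```python
from collections import defaultdict

MAX_DNIS_PER_PORT = 7  # per-port limit stated in the text


class PortDniAllocator:
    """Toy model of DNI slots on physical Ethernet ports (hypothetical, not an AWS API)."""

    def __init__(self):
        # Port label -> list of instance IDs, one entry per attached DNI.
        self._dnis = defaultdict(list)

    def attach(self, port, instance_id, count=1):
        """Record `count` DNIs on `port` for `instance_id`; raise if the port would overflow."""
        if count < 1:
            raise ValueError("count must be at least 1")
        if len(self._dnis[port]) + count > MAX_DNIS_PER_PORT:
            raise ValueError(f"{port} cannot hold more than {MAX_DNIS_PER_PORT} DNIs")
        self._dnis[port].extend([instance_id] * count)

    def free_slots(self, port):
        """Return how many more DNIs the port can accept."""
        return MAX_DNIS_PER_PORT - len(self._dnis[port])


# The example from the text: four DNIs for one instance and three for another on port #1.
allocator = PortDniAllocator()
allocator.attach("RJ45-1", "instance-a", 4)
allocator.attach("RJ45-1", "instance-b", 3)
print(allocator.free_slots("RJ45-1"))  # port #1 is now full
print(allocator.free_slots("RJ45-2"))  # port #2 still has all of its slots
```

In this model, an eighth DNI on the same port is rejected with a `ValueError`, while a second port keeps its own independent budget of seven.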

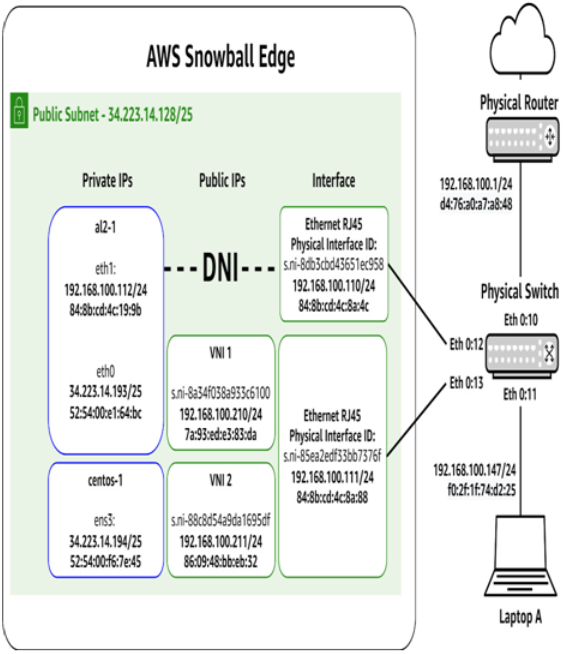

Note that the Storage Optimized variant of AWS Snowball Edge does not support DNIs:

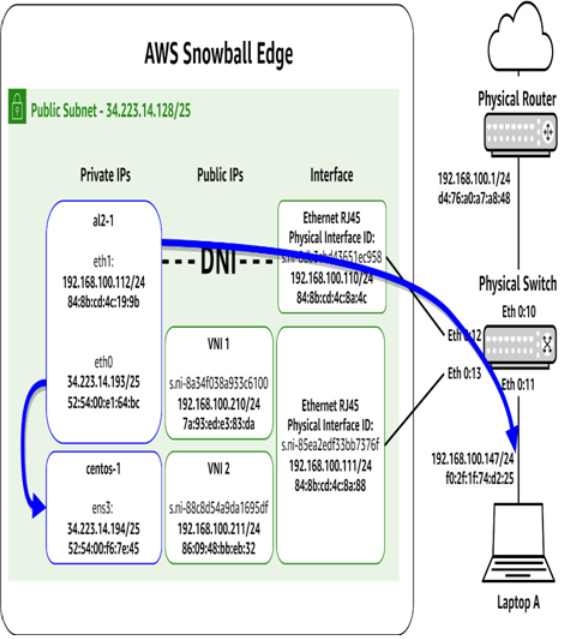

Figure 4.19 – AWS Snowball Edge network flows with DNIs

Looking at Figure 4.19, we can see that al2-1 has two Ethernet interfaces configured inside Linux. One is on the typical 34.223.14.128/25 subnet, but the other is directly on the 192.168.100.0/24 RFC 1918 space. A DNI configuration such as this is the only case in which an interface on an EC2 instance on an AWS Snow Family device should be configured for any subnet other than 34.223.14.128/25.
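As a rough sanity check of the addressing rule above, the following sketch uses Python's standard `ipaddress` module to classify an interface address. The two subnets come from Figure 4.19. The function name `classify` and the sample host addresses are assumptions made for illustration, and a real DNI could sit on whatever subnet the local network uses.

```python
import ipaddress

# Subnets taken from the Figure 4.19 discussion.
INTERNAL_SUBNET = ipaddress.ip_network("34.223.14.128/25")  # typical instance subnet
DNI_SUBNET = ipaddress.ip_network("192.168.100.0/24")       # RFC 1918 space reached via the DNI


def classify(address):
    """Return which of the two subnets an interface address belongs to, if either."""
    ip = ipaddress.ip_address(address)
    if ip in INTERNAL_SUBNET:
        return "internal"
    if ip in DNI_SUBNET:
        return "dni"
    return "unexpected"


# Hypothetical addresses for the two interfaces on al2-1.
for addr in ("34.223.14.193", "192.168.100.50", "10.0.0.5"):
    print(addr, classify(addr))

# Only the DNI subnet is RFC 1918 private space.
print(DNI_SUBNET.is_private, INTERNAL_SUBNET.is_private)
```

An address reported as `unexpected` on a non-DNI interface would suggest a misconfiguration under the rule described above.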

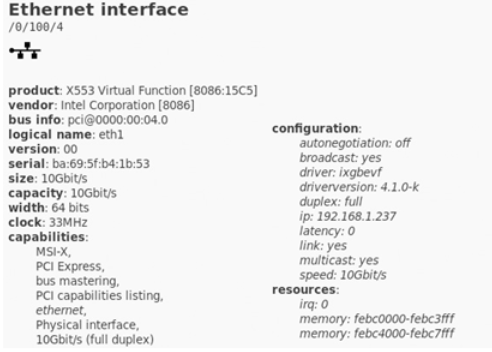

Figure 4.20 shows what a DNI looks like from the perspective of the EC2 instance that has one attached:

Figure 4.20 – DNI details under Amazon Linux 2

Storage allocation

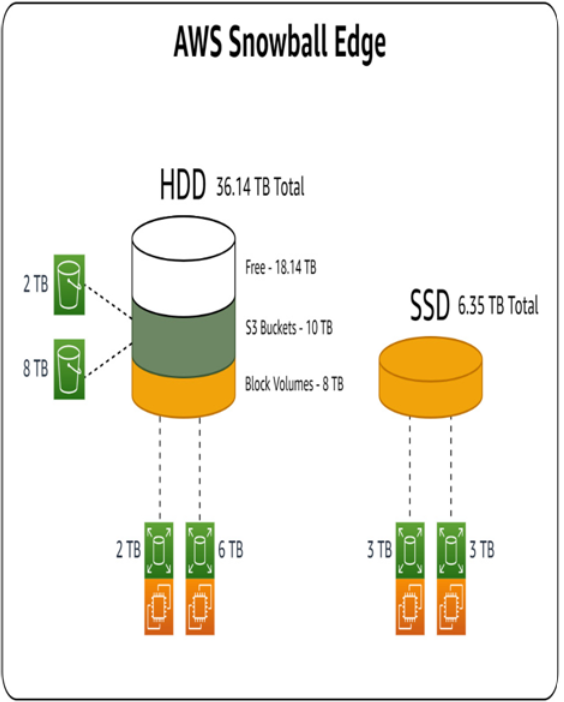

All AWS Snowball Edge device variants work the same way with respect to storage allocation. Object or file storage can draw from the device’s HDD storage capacity, while block volumes used by EC2 instances can be drawn from either the device’s HDD or SSD capacity. Figure 4.21 shows an example of this:

Figure 4.21 – Storage allocation on AWS Snowball Edge

S3 buckets on a device can be thought of as thin-provisioned: they start out consuming 0 bytes and, as objects are added, take only the space those objects need from the HDD capacity.

Block volumes for EC2 instances, on the other hand, can be thought of as thick-provisioned. When the volume is created, a capacity is specified, and that full amount is immediately removed from the pool it is drawn from (HDD or SSD), making it unavailable for any other use.
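The difference between the two allocation styles can be illustrated with a minimal model. Everything here is a sketch under stated assumptions: `CapacityPool`, `Bucket`, and `BlockVolume` are hypothetical names, the sizes are arbitrary units, and nothing in it calls a real device API.

```python
class CapacityPool:
    """A fixed amount of raw capacity (for example, the device's HDD pool)."""

    def __init__(self, total):
        self.total = total
        self.used = 0

    @property
    def free(self):
        return self.total - self.used

    def take(self, amount):
        """Reserve `amount` units, or raise if the pool cannot cover it."""
        if amount < 0:
            raise ValueError("amount must be non-negative")
        if amount > self.free:
            raise ValueError("insufficient capacity in pool")
        self.used += amount


class Bucket:
    """Thin-provisioned: consumes nothing until objects are written."""

    def __init__(self, pool):
        self.pool = pool
        self.size = 0

    def put_object(self, size):
        self.pool.take(size)  # capacity is taken only as objects arrive
        self.size += size


class BlockVolume:
    """Thick-provisioned: the full capacity is reserved at creation time."""

    def __init__(self, pool, capacity):
        pool.take(capacity)  # reserved up front, even if the volume stays empty
        self.capacity = capacity


hdd = CapacityPool(total=100)     # arbitrary units
bucket = Bucket(hdd)              # free capacity is unchanged
bucket.put_object(10)             # 10 units leave the pool
volume = BlockVolume(hdd, 50)     # 50 units leave the pool immediately
print(hdd.free)
```

Creating the bucket costs nothing until data is written, whereas creating the volume reduces free capacity by its full size whether or not any blocks are ever used.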
